OpenAI has built the foundational layer of the AI industry. With large generative models like GPT-3 and DALL-E, OpenAI offers API access to businesses that want to build applications on top of its foundational models, plug those models into their products, and customize them with proprietary data and additional AI features. OpenAI has also released ChatGPT (as explored in the intelligence factory race between AI labs), which it has developed around a freemium model. Microsoft, in turn, commercializes OpenAI’s products through its commercial partnership.

How much does OpenAI make today?

We don’t know OpenAI’s current revenues, yet the company has projected $1 billion in revenues by 2024. According to its Form 990, the IRS’s primary tool for gathering information about tax-exempt organizations, OpenAI reported $3.48 million in revenues in 2020.

2020 was also the year GPT-3 was released, so we might assume that a good chunk of that revenue came from its APIs. This is mostly speculation, which will need to be confirmed if and when OpenAI LP starts releasing its financials.

Source: propublica.org
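To put the two figures in perspective, here is a small Python sketch of the growth they imply. It assumes the $1 billion projection refers to full-year 2024 revenue and treats 2020 to 2024 as four compounding years; the function name is illustrative, and the result is simple arithmetic on the cited numbers, not a forecast.

```python
# Rough arithmetic on the two figures cited above: $3.48M reported revenue
# for 2020 (Form 990) and the $1B revenue projection for 2024.
REVENUE_2020 = 3.48e6    # USD, reported on Form 990
PROJECTED_2024 = 1e9     # USD, OpenAI's own projection
YEARS = 2024 - 2020      # assumption: the projection refers to full-year 2024


def implied_growth(start: float, end: float, years: int) -> tuple[float, float]:
    """Return (total growth multiple, implied compound annual growth rate)."""
    multiple = end / start
    cagr = multiple ** (1 / years) - 1
    return multiple, cagr


multiple, cagr = implied_growth(REVENUE_2020, PROJECTED_2024, YEARS)
print(f"Total multiple: {multiple:.0f}x")
print(f"Implied CAGR: {cagr:.0%}")
```

Under these assumptions, hitting the projection would mean growing revenue roughly 287-fold, or about 4x every year.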

OpenAI APIs

OpenAI monetizes its generative models through its APIs, which any business can access to build new applications.

As explained in the three layers of AI, the middle layer of AI leverages these APIs to build verticalized engines that can perform specific functions much better than a generalized engine like GPT-3.

The business applications layer, in turn, can leverage the APIs to make any app smarter via AI.

Examples of OpenAI’s APIs in use at the consumer application level include tools and products like GitHub Copilot.

ChatGPT

With the release of ChatGPT, OpenAI has expanded its consumer reach for its family of GPT models.

Before ChatGPT, these models could be used either through the OpenAI website or as small applications available on the site.

Those, however, were primarily a way to showcase the capabilities of OpenAI’s APIs.

With the release of ChatGPT, OpenAI’s business model changed, moving from primarily B2B toward a consumer-facing model.

Indeed, ChatGPT reached millions of users soon after its launch, and it is becoming a tool, like Google, that has captured the imagination of hundreds of millions of users.

ChatGPT builds on the underlying GPT models, but it also uses InstructGPT-style training, in which humans in the loop provide feedback that is used to fine-tune the conversational interface so it gets better at handling complex conversations and interactions.

ChatGPT is primarily available for free on the OpenAI website, and it follows a freemium model: a premium version is open to paid users on a $20/mo plan.

In addition, ChatGPT is also monetized via API access.

ChatGPT has been growing at an astounding rate.

To understand the difference between ChatGPT and GPT, you need to grasp how InstructGPT works.

ChatGPT Premium

The premium version of ChatGPT costs $20/mo and gives users a faster version that performs better.

Its functionality is the same as the free version; what changes is speed, performance, and the ability to complete tasks that require more tokens, whereas the free version might stop after a certain number of tokens.

For instance, if you ask the free version of ChatGPT to write a very long essay, it might stop partway through, whereas the premium version should be able to produce longer outputs.

ChatGPT APIs

ChatGPT was also launched as an API endpoint, meaning it can be integrated into any web application.

As OpenAI explained:

The ChatGPT model family we are releasing today, gpt-3.5-turbo, is the same model used in the ChatGPT product. It is priced at $0.002 per 1k tokens, which is 10x cheaper than our existing GPT-3.5 models. It’s also our best model for many non-chat use cases—we’ve seen early testers migrate from text-davinci-003 to gpt-3.5-turbo with only a small amount of adjustment needed to their prompts.
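To make the quoted price concrete, here is a minimal cost-estimation sketch in Python. It assumes a flat price per 1,000 tokens applied to all tokens processed (prompt plus completion), as in the announcement above; the $0.02 figure for the older GPT-3.5 models is simply derived from the "10x cheaper" claim, the 50-million-token workload is hypothetical, and current pricing may differ.

```python
# Sketch: estimate API spend at the per-token prices quoted above.
# Assumption: a flat price per 1,000 tokens, applied to prompt + completion.
GPT_35_TURBO_PER_1K = 0.002                       # USD per 1k tokens (quoted)
GPT_35_LEGACY_PER_1K = GPT_35_TURBO_PER_1K * 10   # implied by "10x cheaper"


def cost_usd(tokens: int, price_per_1k: float) -> float:
    """Cost of processing `tokens` tokens at a flat per-1k-token price."""
    return tokens / 1000 * price_per_1k


monthly_tokens = 50_000_000  # hypothetical workload: 50M tokens per month
turbo = cost_usd(monthly_tokens, GPT_35_TURBO_PER_1K)
legacy = cost_usd(monthly_tokens, GPT_35_LEGACY_PER_1K)
print(f"gpt-3.5-turbo: ${turbo:,.2f} vs older GPT-3.5: ${legacy:,.2f}")
```

At that hypothetical volume, the same workload would cost about $100 a month on gpt-3.5-turbo versus about $1,000 on the older models.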

As OpenAI explains in its documentation, ChatGPT is powered by gpt-3.5-turbo, OpenAI’s most advanced language model.

Using the OpenAI API, you can build your applications with gpt-3.5-turbo to do things like:

- Draft an email or other piece of writing

- Write Python code

- Answer questions about a set of documents

- Create conversational agents

- Give your software a natural language interface

- Tutor in a range of subjects

- Translate languages

- Simulate characters for video games and much more
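As an illustration of the first use case in the list, here is a minimal Python sketch that builds the JSON body for a request to OpenAI’s Chat Completions endpoint (POST https://api.openai.com/v1/chat/completions) using the documented `model` and `messages` fields. The helper name and prompts are hypothetical; the sketch only assembles the payload and does not send it, since a real call also needs an API key passed in an `Authorization: Bearer` header.

```python
import json


# Hypothetical helper: builds the JSON body for a Chat Completions request.
def build_chat_request(task: str,
                       system_prompt: str = "You are a helpful assistant.") -> dict:
    """Return a request body asking gpt-3.5-turbo to perform `task`."""
    return {
        "model": "gpt-3.5-turbo",
        "messages": [
            # The system message sets overall behavior; the user message is the task.
            {"role": "system", "content": system_prompt},
            {"role": "user", "content": task},
        ],
    }


body = build_chat_request("Draft a short email inviting the team to Friday's demo.")
print(json.dumps(body, indent=2))
```

Swapping the user message is enough to target the other tasks in the list (writing Python code, translating, and so on); conversational agents append prior turns to `messages`.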

Foundry

OpenAI launched an enterprise service called Foundry, which extends the API service to enterprise clients, giving them more robust infrastructure (as explored in the economics of AI compute infrastructure) for higher volumes.

According to TechCrunch, a lightweight version of GPT-3.5 will cost $78,000 for a three-month commitment or $264,000 for a one-year commitment.
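The two commitment options are easier to compare on a monthly basis. This short Python sketch assumes the cost is spread evenly over each term and ignores any other contract terms.

```python
# Effective monthly cost of the two Foundry commitments reported by TechCrunch
# for a lightweight GPT-3.5 instance. Assumes cost is spread evenly over the term.
COMMITMENTS = {
    "3-month": (78_000, 3),     # (total USD, months)
    "12-month": (264_000, 12),
}


def monthly_rate(total_usd: float, months: int) -> float:
    """Spread a fixed commitment evenly across its term."""
    return total_usd / months


rates = {name: monthly_rate(total, months)
         for name, (total, months) in COMMITMENTS.items()}
# Relative saving per month from choosing the longer commitment.
discount = 1 - rates["12-month"] / rates["3-month"]

for name, rate in rates.items():
    print(f"{name}: ${rate:,.0f}/month")
print(f"Annual commitment discount: {discount:.1%}")
```

On these figures, the three-month option works out to $26,000 per month and the annual one to $22,000 per month, roughly 15% less.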

OpenAI/Microsoft

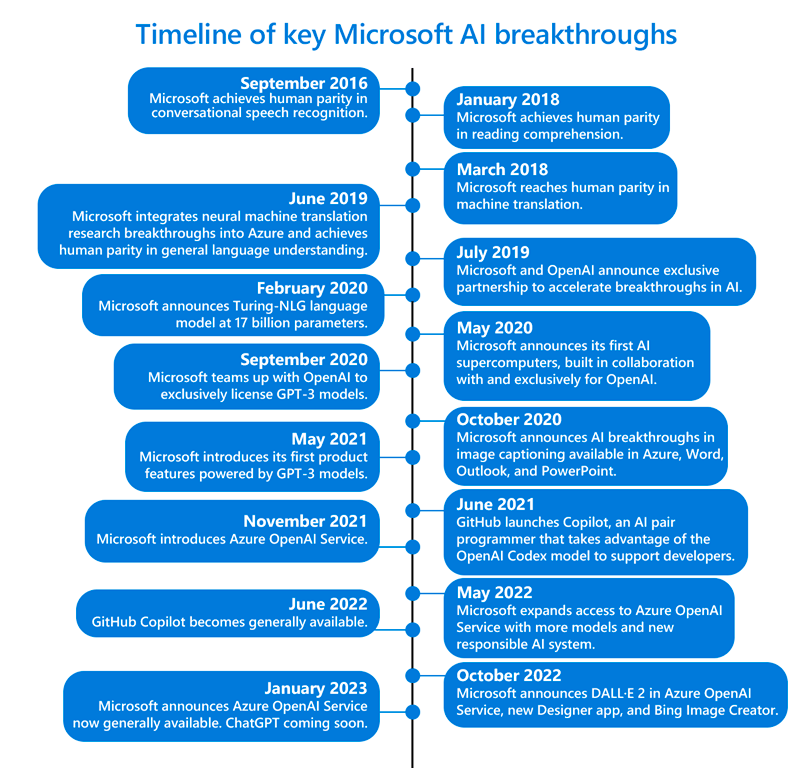

OpenAI and Microsoft have formed a commercial partnership. I’ve explained the history of the partnership, which started in 2016 and was consolidated in 2019.

It’s now taking a leap forward: Microsoft has put several billion more dollars into the partnership through multi-year commercial agreements, under which it keeps providing the infrastructure OpenAI needs to grow while gaining the commercial rights to distribute and integrate OpenAI’s products.

How is Microsoft benefiting from it?

And what’s the business model followed by this partnership?

Main Investor in the OpenAI LP

Microsoft is the main investor in the OpenAI LP, a for-profit yet capped entity created by the OpenAI non-profit foundation to raise capital from the private sector.

In 2019, the partnership strengthened when Microsoft invested $1 billion.

This gave Microsoft various advantages.

Microsoft Azure AI Supercomputer

Microsoft’s business model is quite diversified, and yet Microsoft Azure, its cloud business unit, is a key element of it.

Today, Azure is among the top players in the cloud industry, together with Amazon AWS.

Over the last decade, cloud providers have enabled hundreds of startups. It’s no secret that AWS, by the early 2010s, empowered a class of startups that later became prominent tech companies (Netflix, Uber, Slack, and many others).

Yet, in the 2020s, the cloud industry’s playing field has changed and is skewing toward AI.

In other words, large generative models, from GPT-3 onward, need a huge amount of computational power just to be trained and released, and today only a few players can provide it.

Differentiating a cloud business by making it the underlying infrastructure for AI is critical to keeping the cloud offering from being commoditized over time.

In fact, cloud players like Microsoft and Amazon have enjoyed high profit margins in recent years, thanks to the lack of competition and their massive infrastructure.

Yet, in the next decade, as AI models take over the world, cloud computing might get commoditized, unless it is built around AI. That is what Microsoft is doing with its Azure AI supercomputing technology.

In other words, since 2016 the partnership between OpenAI and Microsoft has given Microsoft Azure’s team important insight into how to build supercomputers that can help AI companies build custom AI models.

This is the first key strategic advantage Microsoft has gained from its partnership with OpenAI: the development of its cloud AI infrastructure.

Microsoft Azure Enterprise Platform

In addition, Microsoft Azure is integrating all of OpenAI’s products into its business and enterprise platform.

This integration enables Azure to become the go-to enterprise platform for the development of custom AI models, which can be integrated into the workflow of any business.

Integration into Microsoft Business And Consumer Applications

Another key benefit of the partnership between Microsoft and OpenAI is the integration of these AI models within Microsoft’s business and consumer applications products.

Examples include GitHub Copilot, an AI-based tool that helps developers write code much faster by leveraging GPT-3.

Microsoft is also looking into ways to integrate these AI models into its other products, like Bing and Office.

Another large bet Microsoft might make is integrating these AI models into its AR interfaces (HoloLens) as general-purpose assistants that could make those devices much more valuable.

The AI App Store?

By combining AI as an interface with a hardware device like HoloLens and an operating system like Windows Holographic OS, could Microsoft and OpenAI come up with the next App Store for AR?

How do OpenAI’s corporate and organizational structures work?

Key Highlights

- OpenAI’s Foundational Role in the AI Industry:

  - OpenAI has developed large generative models like GPT-3 and DALL-E.

  - These models are made accessible through APIs, enabling businesses to build applications on top of them.

  - OpenAI allows customization of these models with proprietary data and additional AI features.

- Revenue and Financials:

  - OpenAI’s exact revenue is not publicly known.

  - In 2020, it reported $3.48 million in revenues, a significant portion of which may have come from API access after GPT-3’s release.

  - OpenAI projected reaching $1 billion in revenues by 2024.

- OpenAI APIs:

  - OpenAI offers APIs for businesses to integrate generative models into various applications.

  - These APIs serve as the foundational layer for creating verticalized AI engines and enhancing consumer applications.

- ChatGPT:

  - ChatGPT expanded OpenAI’s consumer reach, shifting its model from primarily B2B to a consumer-focused approach.

  - Millions of users have engaged with ChatGPT.

  - It leverages both GPT and InstructGPT models to handle complex conversations.

- ChatGPT Premium:

  - ChatGPT is available in a premium version for $20/mo, offering better speed and performance.

  - The premium version allows tasks to be completed with more tokens than the free version.

- ChatGPT APIs:

  - ChatGPT APIs, powered by gpt-3.5-turbo, enable integration into web applications.

  - Pricing is $0.002 per 1k tokens, 10x cheaper than OpenAI’s existing GPT-3.5 models.

- OpenAI Foundry:

  - OpenAI’s enterprise service Foundry extends the API service to enterprise clients.

  - Foundry offers a range of pricing options based on commitment length.

- OpenAI and Microsoft Partnership:

  - Microsoft invested in OpenAI and became a commercial partner.

  - The partnership helped Microsoft develop its Azure AI supercomputing infrastructure.

  - Azure integrates OpenAI’s products and models, strengthening its AI infrastructure.

  - OpenAI’s models are integrated into Microsoft’s business and consumer products.

- Corporate Structure:

  - OpenAI has two main entities: OpenAI, Inc., and OpenAI LP.

  - OpenAI, Inc. is controlled by the OpenAI non-profit foundation and acts as the General Partner of OpenAI LP.

  - OpenAI LP includes Limited Partners such as employees, board members, investors (e.g., Microsoft), and foundations.
