This analysis is part of Amazon’s AI Business Model Pivot, a deep dive by The Business Engineer.

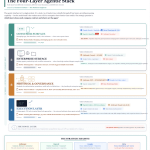

At re:Invent 2025, AWS unveiled a comprehensive four-layer agent technology stack that represents its most ambitious platform play since the original cloud infrastructure offering.

Layer 1: Foundation

The base layer includes Amazon Nova 2 models (launched at re:Invent) and Trainium2 custom silicon (500K chips in Project Rainier, scaling to 1M), backed by a $200B backlog with “unannounced October deals exceeding all Q3 deal volume.” The Anthropic partnership adds Claude as a key model available through Bedrock.

Layer 2: AgentCore Infrastructure

AgentCore is the governance and control plane, described internally as “Kubernetes for agents.” It includes four components: Runtime (framework-agnostic deployment), Policy (Cedar-based guardrails), Memory (episodic context persistence across sessions), and Evaluations (pre-production testing and validation).
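To make the control-plane idea concrete, here is a minimal sketch of how a policy check and session memory might sit between an agent and the action it wants to take. It is an illustration only, not AgentCore's API: the `Policy`, `Memory`, and `run_action` names are hypothetical, and the allow-list merely imitates Cedar's default-deny behavior (nothing is permitted unless a policy explicitly permits it).

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class Policy:
    """Hypothetical default-deny allow-list, loosely imitating Cedar permits."""

    permitted: set[tuple[str, str]]  # (agent, action) pairs that are allowed

    def is_allowed(self, agent: str, action: str) -> bool:
        # Anything not explicitly permitted is denied.
        return (agent, action) in self.permitted


@dataclass
class Memory:
    """Hypothetical episodic store: one event log per session."""

    episodes: dict[str, list[str]] = field(default_factory=dict)

    def remember(self, session_id: str, event: str) -> None:
        self.episodes.setdefault(session_id, []).append(event)

    def recall(self, session_id: str) -> list[str]:
        # Return a copy so callers cannot mutate the stored log.
        return list(self.episodes.get(session_id, []))


def run_action(policy: Policy, memory: Memory, session_id: str,
               agent: str, action: str) -> str:
    """Gate an agent action on policy and record the outcome in memory."""
    if not policy.is_allowed(agent, action):
        memory.remember(session_id, f"denied:{action}")
        return "denied"
    memory.remember(session_id, f"ran:{action}")
    return "ok"
```

The design point is that the guardrail and the memory live outside the agent itself, which is what lets a control plane of this kind stay framework-agnostic.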

Layer 3: Frontier Agents

AWS launched specialized AI workers: Kiro codes autonomously for days, Security Agent performs penetration testing and vulnerability scanning, DevOps Agent manages incident response and root cause analysis (already deployed at Commonwealth Bank), and Connect runs AI contact center interactions at a $1B ARR scale.

Layer 4: Integration

The top layer connects agents to the world through Model Context Protocol (MCP), Powers Extensions with Figma and Netlify partnerships, GitHub integration for Kiro, and Visa Agentic Commerce in AWS Marketplace.
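For readers unfamiliar with MCP, the protocol exchanges JSON-RPC 2.0 messages, and an agent invokes an external capability with a `tools/call` request. The sketch below builds such a request; the tool name and arguments are hypothetical placeholders, not tools that AWS or its partners are known to expose.

```python
import json


def build_tool_call(request_id: int, tool_name: str, arguments: dict) -> str:
    """Serialize an MCP-style `tools/call` request as a JSON-RPC 2.0 message."""
    message = {
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    }
    return json.dumps(message)


# Hypothetical tool name and arguments, for illustration only.
request = build_tool_call(1, "create_deploy_preview", {"branch": "main"})
```

Because every integration speaks the same message shape, adding a new partner tool is a matter of registering a new tool name rather than writing a new client.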

The strategic insight: AWS is building the “full stack for agents,” from silicon to specialized workers. Each layer creates lock-in. Together, they create an agentic moat.